🎉 ChatGPT Images 2.0, Claude Design launch, Codex super app shift, Opus 4.7 release, Meta employee data capture

AI leaders are rapidly converging on full-stack, agent-driven platforms while escalating competition at the frontier and raising new ethical concerns around training data.

Welcome to this week’s edition of AImpulse, a five-point summary of the most significant advancements in the world of Artificial Intelligence.

Here’s the pulse on this week’s top stories:

OpenAI — ChatGPT Images 2.0

What’s happening

OpenAI released ChatGPT Images 2.0, a major upgrade to its image generation model that had already been gaining viral traction in testing. The company is calling it its most advanced image model to date.

Details

The model uses a “thinking” step before generation, enabling planning, web reference lookup, and output validation.

It ranks No. 1 on Arena AI’s text-to-image leaderboard, outperforming competitors like Nano Banana 2 across all categories.

Supports 2K resolution, batch generation of up to 8 images, flexible aspect ratios (3:1 to 1:3), and multilingual text rendering.

Sam Altman compared the leap to “going from GPT-3 to GPT-5,” with availability across ChatGPT, Codex, and the API.

Why it matters

This marks OpenAI’s return to the top tier of image generation after a period of lagging behind competitors. More importantly, “thinking” image models shift creative workflows, enabling higher reliability and entirely new forms of visual ideation.

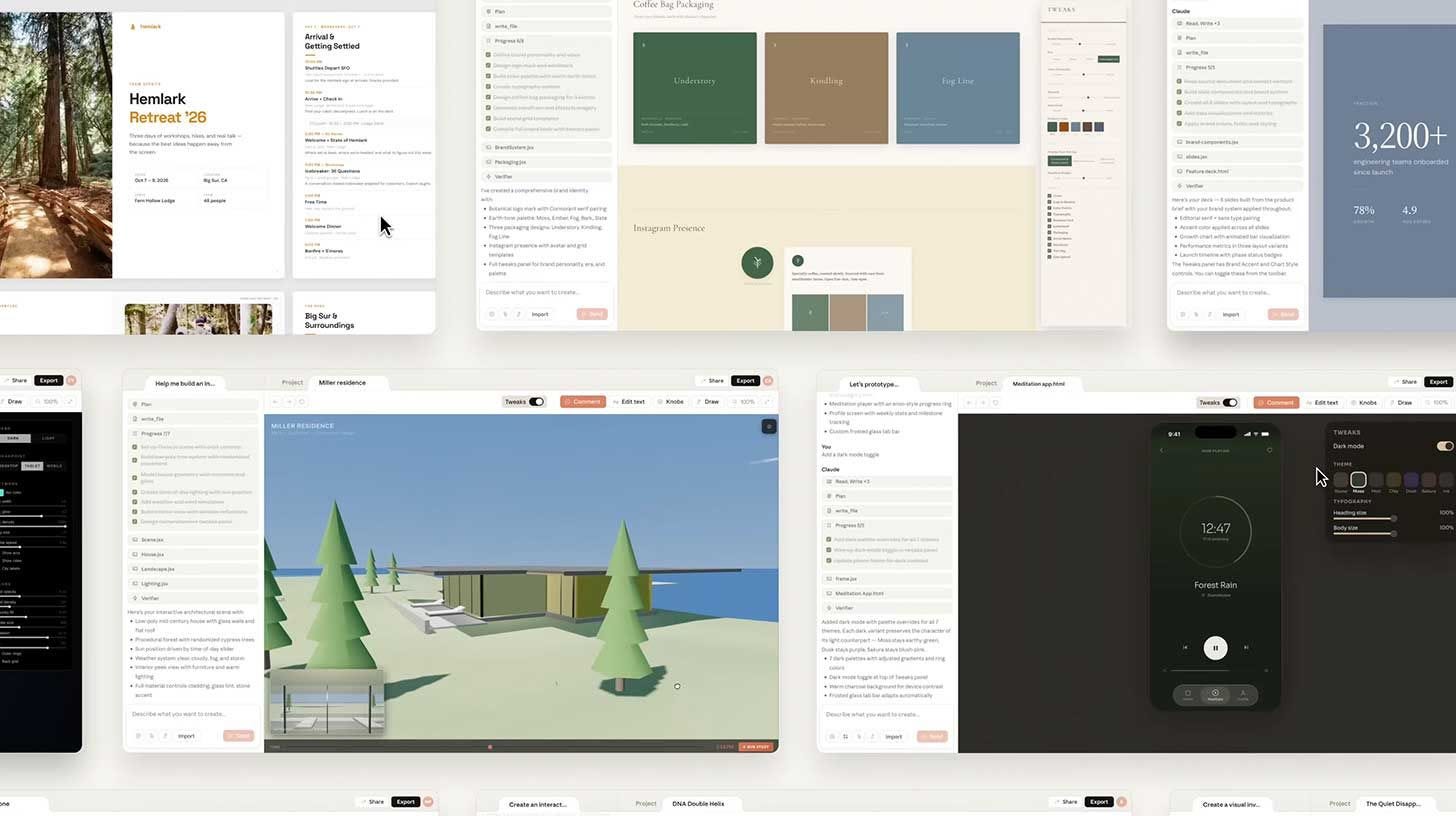

Anthropic — Claude Design

What’s happening

Anthropic launched Claude Design, a tool that turns prompts, screenshots, and codebases into fully interactive design outputs. It is powered by the company’s new Opus 4.7 vision model.

Details

Claude ingests codebases and mockups to generate a reusable brand system applied across projects.

Users can iterate via chat, inline comments, direct edits, or auto-generated UI controls for layout and styling.

Outputs can be exported to formats like Canva, PPTX, PDF, or HTML, or handed off to Claude Code as build-ready assets.

CPO Mike Krieger stepped down from Figma’s board days before launch amid competition rumors.

Why it matters

Anthropic is collapsing the design lifecycle, from concept to production, into a single AI-native workflow. This signals a broader trend of design, development, and collaboration converging into unified AI platforms.

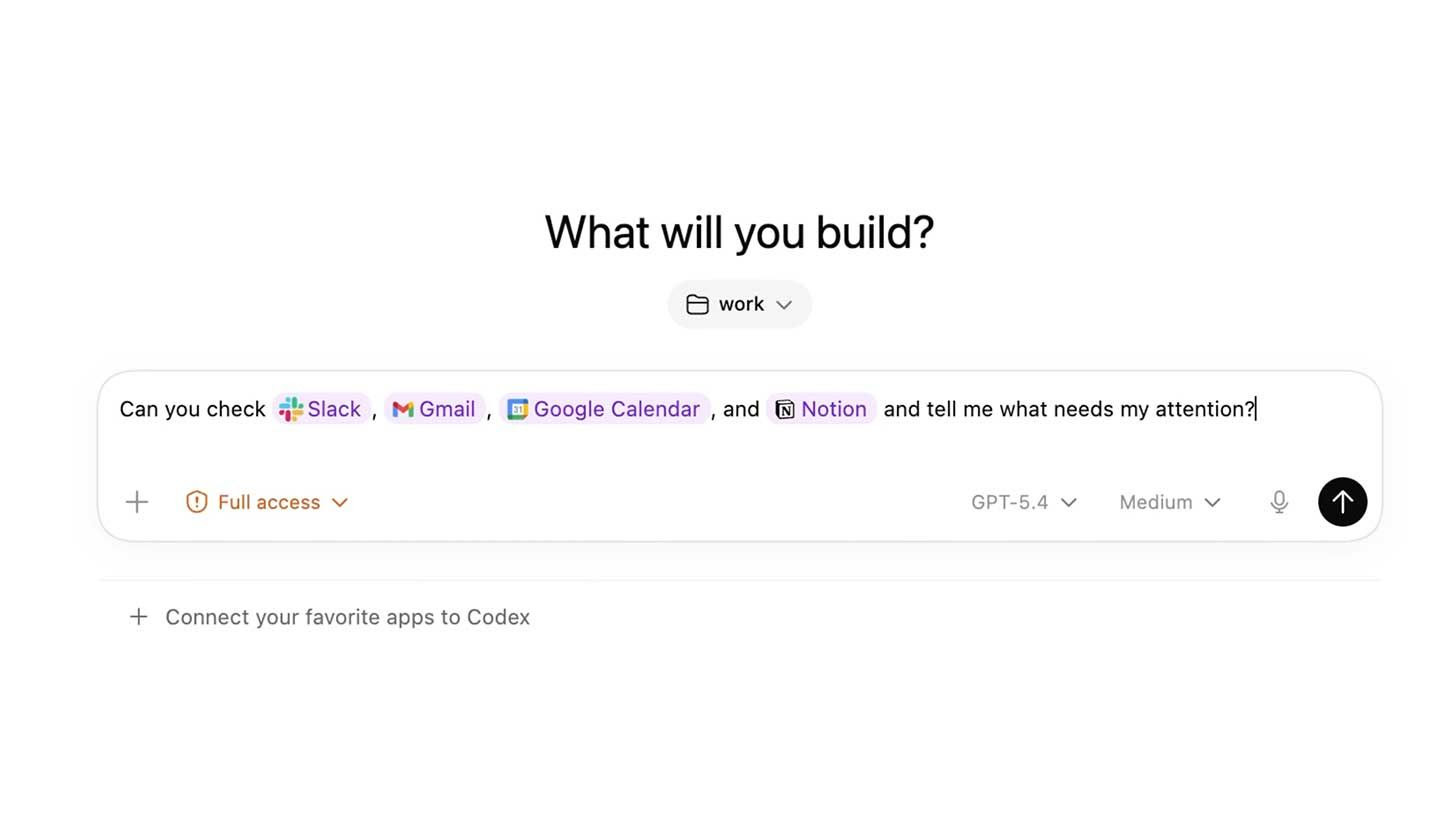

OpenAI — Codex Expansion

What’s happening

OpenAI has overhauled Codex into a unified application that combines ChatGPT, Atlas, and Codex in a single environment. The platform now extends far beyond coding into general-purpose agentic workflows.

Details

Background computer use allows agents to operate Mac applications autonomously, even without APIs.

Parallel agents can execute multiple tasks simultaneously across environments.

Memory (preview) enables persistent context, while automations allow long-running workflows across days.

Codex reached 3M weekly users with 70% MoM growth, with leadership positioning it as a “super app.”

Why it matters

This is OpenAI’s clearest move toward an integrated AI “super app” competing directly with Anthropic’s ecosystem. The shift reframes Codex from a coding assistant into a general operating layer for digital work.

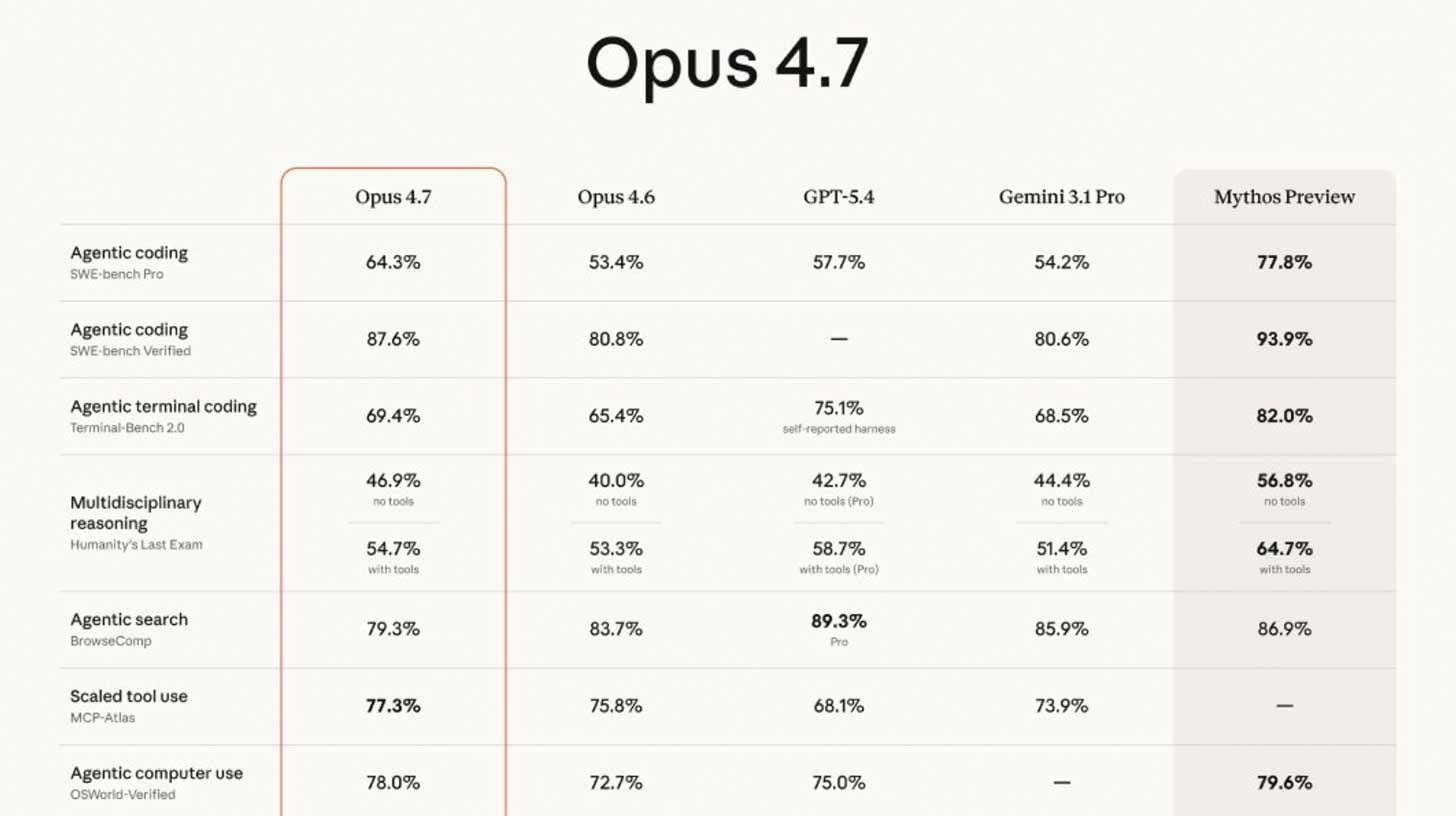

Anthropic — Claude Opus 4.7

What’s happening

Anthropic released Claude Opus 4.7, its latest flagship public model focused on agentic coding performance. It outperforms major competitors on benchmarks but trails Anthropic’s internal frontier model.

Details

Achieves 64.3% on SWE-bench Pro, up from 53.4% in 4.6, while internal Mythos Preview leads at 77.8%.

Pricing remains unchanged from 4.6, though token consumption is significantly higher.

Introduces new tooling like an “xhigh” effort mode and an /ultrareview command for bug detection and design critique.

Early user feedback is mixed, following complaints about degraded performance in 4.6.

Why it matters

Anthropic is now operating a dual-track model strategy: rapid public releases alongside a gated frontier tier. This creates a widening gap between what developers can access and where the true cutting edge resides.

Meta — Model Capability Initiative (MCI)

What’s happening

Meta has launched an internal initiative to collect employee activity data, including screenshots, keystrokes, and mouse movements, for AI training. The program has no opt-out and has triggered internal backlash.

Details

Data collection focuses heavily on developers, capturing workflows across tools like VS Code, Google Chat, and Gmail.

Internal communications confirm employees cannot opt out, per CTO Andrew Bosworth.

The initiative overlaps with layoffs affecting ~8,000 employees, whose workflows are being recorded ahead of departure.

Meta positions the effort as passive contribution to improving its AI models through everyday work.

Why it matters

Meta is applying robotics-style human data capture to software workflows, effectively turning employees into training inputs. Combined with layoffs, the move raises serious ethical and cultural concerns about surveillance and consent in AI development.